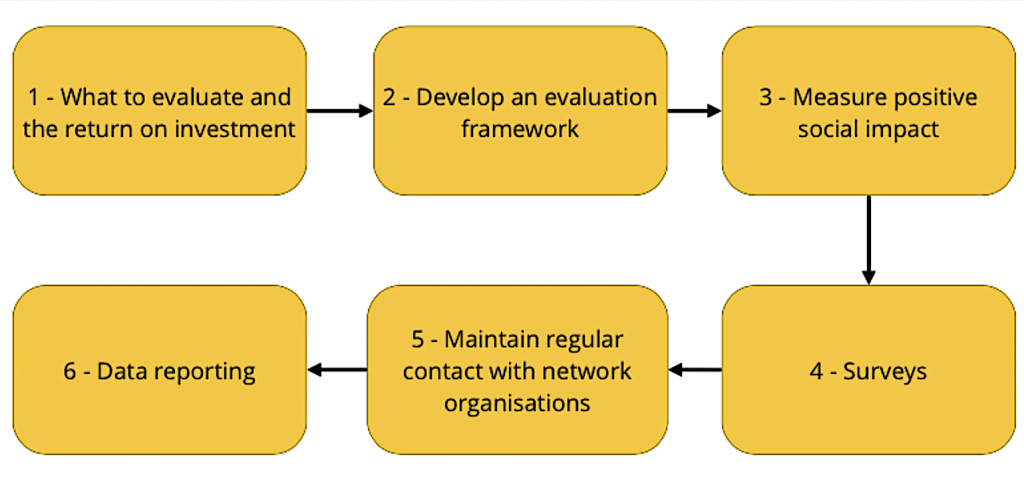

Evaluating a digital inclusion programme – step by step

Funders will want to know the impact of their investment, and you’ll want to know what has worked well and what has not. If you are unsure where to start, this step-by-step guide to evaluating a digital inclusion programme, written by experts in the field, can help. You can also read a case study on how 100% Digital Leeds evaluated their digital inclusion programme.

We’d also like to hear what’s working for you, so that we can add to our library of case studies.

Six steps to evaluating a digital inclusion programme

1 - What to evaluate and the return on investment

Digital inclusion is a means to an end, not an end in itself. It is not about digital, it is about inclusion. People can use the internet to tackle many of the challenges and inequalities they face on a daily basis.

Being online means having access to:

- cheaper goods

- services and utilities

- more employment opportunities

- self-management tools for long-term health conditions

- easier ways to deal with the council and government departments

Think about how you could turn these benefits, and others, into metrics for evaluating how successful your work has been. For more inspiration, take a look at the 100% Digital Leeds evaluation case study and see how they decided what to evaluate.

2 - Develop an evaluation framework

Think about creating a sustainable evaluation framework to measure the programme’s:

- return on investment

- social impact

- progress towards 100% digital inclusion

If you do not have the in-house skills to do this, think about partnering with an organisation that could help you. For example, 100% Digital Leeds partnered with the Good Things Foundation to design their model, drawing on that organisation’s expertise as a national leader in the research and evaluation of digital inclusion programmes.

Your evaluation framework should allow you to measure improved outcomes across a range of indicators. It should also give you a methodology that you can use to report the return on investment that digital inclusion brings to residents, the council and the area as a whole.

4 - Surveys

The framework could also include surveys to evaluate impact.

Ongoing user progression surveys

Ongoing user progression surveys collect demographic data to measure trends and build a profile of end users. For example:

- age

- employment status

- disabilities and long-term health conditions

- language needs

The survey can also capture attitudinal and behavioural change, and provide the data needed to calculate channel shift savings. It can be rolled out to any partners who offer digital access and support to users.
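As a rough illustration of how progression survey responses could be turned into a profile of end users, here is a minimal Python sketch. The field names (`age_band`, `employment_status`, `language_need`) and the sample responses are hypothetical, made up for the example, and are not taken from any real survey.

```python
from collections import Counter

# Hypothetical survey responses; field names and values are illustrative only.
responses = [
    {"age_band": "65+", "employment_status": "retired", "language_need": "none"},
    {"age_band": "65+", "employment_status": "retired", "language_need": "interpreter"},
    {"age_band": "25-44", "employment_status": "unemployed", "language_need": "none"},
    {"age_band": "45-64", "employment_status": "employed", "language_need": "none"},
]


def build_profile(responses, field):
    """Return the share of respondents in each category of one survey field.

    Responses that left the field blank are ignored.
    """
    counts = Counter(r[field] for r in responses if r.get(field))
    total = sum(counts.values())
    return {category: count / total for category, count in counts.items()}


age_profile = build_profile(responses, "age_band")
for band, share in sorted(age_profile.items()):
    print(f"{band}: {share:.0%}")
```

Running the same function on each demographic field, and comparing the results over time, is one simple way to spot trends in who the programme is reaching.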

Monthly activity surveys

Monthly activity surveys are designed to collect data to provide quantitative evidence of the impact delivered by partners in communities and among target audiences.

This is so that you can state, for example, “x organisations in the movement are helping people find employment and saw y people this quarter.”
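A headline statement like this could be produced from monthly returns along the following lines. This is a sketch under assumptions: the record layout (`organisation`, `month`, `activity`, `people_supported`) and all of the sample figures are hypothetical.

```python
from collections import defaultdict

# Hypothetical monthly activity returns from partner organisations.
returns = [
    {"organisation": "Org A", "month": "2024-01", "activity": "employment", "people_supported": 12},
    {"organisation": "Org A", "month": "2024-02", "activity": "employment", "people_supported": 8},
    {"organisation": "Org B", "month": "2024-02", "activity": "employment", "people_supported": 5},
    {"organisation": "Org B", "month": "2024-03", "activity": "health", "people_supported": 7},
    {"organisation": "Org C", "month": "2024-04", "activity": "employment", "people_supported": 9},
]


def summarise_activity(returns, quarter_months):
    """Return {activity: (number of organisations, people supported)} for one quarter."""
    organisations = defaultdict(set)
    people = defaultdict(int)
    for record in returns:
        if record["month"] in quarter_months:
            organisations[record["activity"]].add(record["organisation"])
            people[record["activity"]] += record["people_supported"]
    return {activity: (len(organisations[activity]), people[activity])
            for activity in organisations}


def headline(summary, activity, description):
    """Format the quarterly headline sentence for one activity."""
    n_orgs, n_people = summary.get(activity, (0, 0))
    return (f"{n_orgs} organisations in the movement are {description} "
            f"and saw {n_people} people this quarter.")


quarter = {"2024-01", "2024-02", "2024-03"}
summary = summarise_activity(returns, quarter)
print(headline(summary, "employment", "helping people find employment"))
```

Note that adding up monthly totals counts a person once for each month they attend, so if you need a count of unique individuals the survey would have to collect that separately.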

It’s recommended you visit each organisation quarterly to collect qualitative evidence in the form of user case studies and organisational case studies, featuring quotes and images. These can be organised in categories relevant to specific agendas, for example:

- health

- employment and skills

- financial resilience

- community integration

- reduced isolation or loneliness

- greater independence

Ongoing digital champions progression survey

Digital champions work in communities, advocating digital practices. Progression surveys can monitor the:

- effectiveness of digital champions training

- impact of the practical application of training

- engagement of end users by digital champions

5 - Maintain regular contact with network organisations

In addition to these surveys for the more engaged organisations, you should continue to maintain quarterly contact with less engaged organisations in your network. This should help to identify any support they might need to boost their progress and engagement with end users.

6 - Data reporting

Once you have gathered all your data, there are a number of things you should then be able to report on:

- the overall number of organisations, online centres, tablet lending participants and digital champions, demonstrating the reach and visibility of the programme

- the nature of the digital inclusion activity of organisations and digital champions

- the number of end users benefiting, plus their demographic data

- the profile of individuals benefiting from the programme in the form of case studies

- the profile of organisations benefiting from the programme in the form of case studies

- channel shift savings to public services (NHS, JobCentre Plus, other local/national government departments)

As people move to online transactions to replace phone calls and visits, cost savings can be applied to those behavioural changes. For more information on the savings that can be achieved, please read the 100% Digital Leeds evaluation case study.
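The arithmetic behind a channel shift estimate can be sketched as follows: for each transaction that moves online, the saving is the cost of the old channel minus the cost of the online channel. The unit costs below are placeholders invented for the example, not real figures, and should be replaced with published or locally agreed costs. The function and variable names are also hypothetical.

```python
# Placeholder unit costs per transaction, in pounds. These are NOT real figures;
# substitute published or locally agreed costs before using the result.
UNIT_COST = {"face_to_face": 8.0, "phone": 3.0, "online": 0.5}


def channel_shift_saving(shifted, unit_cost=UNIT_COST):
    """Estimate the saving from transactions that have moved online.

    `shifted` maps the original channel to the number of transactions
    that moved from that channel to online.
    """
    online_cost = unit_cost["online"]
    return sum(count * (unit_cost[channel] - online_cost)
               for channel, count in shifted.items())


# Hypothetical example: 100 visits and 200 phone calls replaced by online transactions.
shifted = {"face_to_face": 100, "phone": 200}
print(f"Estimated saving: £{channel_shift_saving(shifted):,.2f}")
```

With these placeholder costs the example gives £1,250.00 (100 × £7.50 plus 200 × £2.50). The number of shifted transactions would typically come from the behavioural change questions in the user progression survey.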